Incentives or Obligations? The U.S. Regulatory Approach to Voluntary AI Governance Standards

By FPF Legal Intern Rafal Fryc

As artificial intelligence is increasingly deployed across every sector of the economy, regulators find themselves grappling with a fundamental challenge: how to govern a technology that defies traditional regulatory frameworks and changes faster than legislation can keep up. One approach gaining traction can be found outside the text of statutes, where state legislatures are pointing developers and deployers toward established voluntary governance frameworks like NIST’s AI Risk Management Framework or ISO 42001. This shift toward incorporating non-binding technical standards into legal requirements represents more than just regulatory convenience – it is creating a new legal regime in which voluntary industry guidelines influence everything from negligence determinations and punitive damage calculations to affirmative defenses in regulatory actions. Understanding how these soft law approaches shape legal expectations has become essential for anyone building, deploying, or governing AI systems.

This blog post highlights:

- The Growth of AI Laws Utilizing Voluntary Standards: Colorado, Texas, California, New York and Montana have each taken different approaches to incorporating voluntary AI standards into law, creating a landscape where compliance with the same frameworks carries different legal weight and protection.

- The Proposed Laws and Their Application: Pending legislation across multiple states is building on these existing models, with frontier model bills expanding California’s approach, liability bills adopting Texas-style safe harbors, and ADMT bills blending Colorado’s hybrid of mandates and affirmative defenses.

- The Real Effects on Litigation: Even absent statutory requirements, courts are already using frameworks like NIST’s AI RMF to define the standard of care in negligence and strict liability cases, meaning compliance with voluntary standards is effectively becoming a baseline for reasonable conduct regardless of whether a jurisdiction has passed AI-specific legislation.

The Growth of AI Laws Utilizing Voluntary Standards

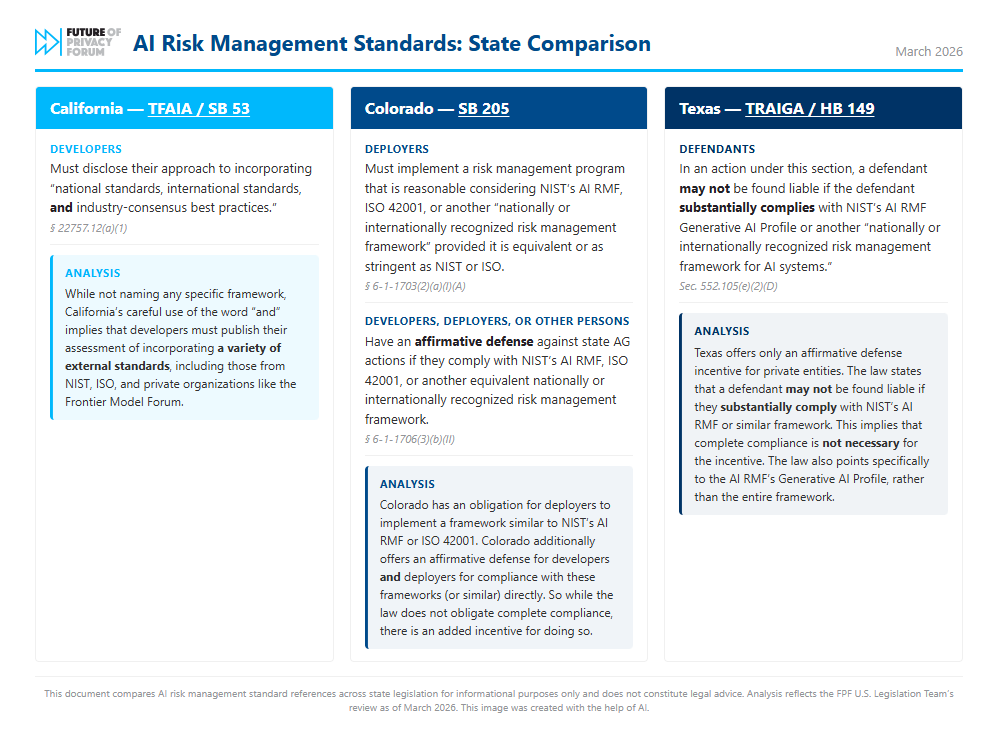

Colorado’s AI Act (SB 205), prior to the revised policy framework, was the first in the U.S. to require deployers to implement a “risk management policy and program” that aligns with NIST’s AI RMF, ISO 42001, or another “nationally or internationally recognized risk management framework for artificial intelligence systems.”1 In addition to requiring implementation, Colorado offered deployers and developers an affirmative defense for compliance with these frameworks. Although Colorado was the first to introduce such provisions in the AI space, the Colorado AI Policy Working Group removed mentions of external standards from the AI Act in its latest proposed revisions. Texas later entered the scene with the Texas Responsible Artificial Intelligence Governance Act (TRAIGA), which also creates an affirmative defense for developers or deployers that comply with NIST’s AI RMF or another nationally or internationally recognized risk management framework for AI systems.2 Most recently, California’s Transparency in Frontier Artificial Intelligence Act (TFAIA) requires developers to disclose whether and to what extent they incorporate “national standards, international standards, and industry-consensus best practices.”3 New York’s RAISE Act (A 9449) takes a similar approach to California’s, requiring developers to disclose how they “handle” incorporating external standards. Montana (SB 212) also passed a narrow law requiring deployers to implement a risk management framework that considers external standards when AI is deployed in critical infrastructure.

While Texas takes an incentive-based approach by offering compliance with a non-binding framework as an affirmative defense, California requires that deployers or developers consider and publish their approach to risk management frameworks that align with, or are substantially similar to, nationally or internationally recognized standards. Colorado originally occupied a complicated middle ground, mandating adherence for deployers’ risk management while simultaneously offering an affirmative defense from state AG actions for developers, deployers and “other persons.” As with many laws surrounding emerging technologies, the approach is fragmented: entities using the same voluntary AI governance frameworks are subject to varying degrees of liability and protection.

The Proposed Laws and Their Application

Nonetheless, the approach appears to be gaining momentum, particularly with proposed legislation regarding frontier models, liability, and automated decisionmaking. All take language from existing bills to require or encourage developers and deployers to have a written policy that takes into account NIST’s AI RMF or a similar framework.4

Frontier Model Bills

Bills focusing on frontier models either copy or expand California’s approach. While California only requires developers to publish their approach to external standards, some bills require developers and deployers to implement a framework that incorporates the same standards. However, bills in this category notably do not refer to specific standards like NIST or ISO, instead opting for the more general terms “industry-consensus best practices” and “national/international standards.” Within this category, the bills take one of two approaches: mandating a single framework that incorporates or considers external standards, or mandating both a public safety plan and a separate child protection plan that do the same. Bills taking the first approach include Illinois SB 3312 and Illinois HB 4799. Interestingly, both bills require any amendments or rulemakings made pursuant to the statute to also consider the same external standards. Bills taking the second approach include Illinois SB 3261, Utah HB 286, Tennessee SB 2171, and Nebraska LB 1083. These bills focus not only on frontier model developers but also on chatbot providers.

Liability Bills

Bills focusing on liability typically follow Texas’ approach, giving developers and deployers a safe harbor from product liability litigation if they implement external standards at various points in the AI lifecycle. Bills in this category include Illinois SB 3502/SB 3590, Maryland HB 712, and Vermont H 792. The requirements to achieve safe harbor differ for developers and deployers. Developers must conduct “testing, evaluation, verification, validation, and auditing of that system consistent with industry best practices” and also submit a data sheet to the state Attorney General that includes:

- “Information on the intended contexts and uses of the artificial intelligence system in accordance with industry best practices;

- Information regarding the datasets upon which the artificial intelligence system was trained, including sources, volume, whether the dataset is proprietary, and how the datasets further the intended purpose of the product;

- Accounting of foreseeable risks identified and steps taken to manage them consistent with industry best practices; and

- Results of red-teaming testing and steps taken to mitigate identified risks, consistent with industry best practices.”

Deployer requirements for safe harbor are comparatively relaxed: the bills mandate a risk management framework that incorporates external standards.

ADMT Bills

Bills focusing on ADMT follow Colorado’s original approach, made prior to the revised policy framework, which combines requirements to implement these standards with safe harbors from litigation to encourage doing so. Washington’s HB 2157 presumes conformity with the statute if the developer or deployer follows NIST’s AI RMF or ISO 42001. The bill also offers a rebuttable presumption to deployers if they implement a risk management framework that conforms to NIST, ISO, or another standard of similar rigor. New York’s S 1169, on the other hand, requires developers and deployers to implement a risk management policy that conforms with NIST’s AI RMF or another standard designated by the state’s Attorney General. This bill diverges from the others by granting the state Attorney General power to name which standards qualify under the statute, whereas the majority of other bills leave that question unanswered.

The Real Effects on Litigation

Industry standards like NIST’s AI RMF can also be used by courts to determine whether companies exercised reasonable care, even when no statute requires their adoption. This judicial reliance on voluntary standards follows established patterns from product liability and negligence cases, where courts have long looked to industry practices to define standards of care. In AI litigation, these frameworks can emerge as critical evidence in three areas: establishing the duty of care in negligence claims, determining defects in strict liability cases, and assessing good faith conduct when calculating punitive damages. The result is that compliance with non-binding standards can determine liability regardless of whether a jurisdiction has AI-specific legislation.

Product liability in AI litigation can be split into two categories: negligence cases and strict liability cases. Negligence cases depend on whether the defendant owed a duty of care to the plaintiff and whether that duty was breached. A duty of care’s existence depends on many factors, including industry standards. Many courts have recognized that “[e]vidence of industry standards, customs, and practices is ‘often highly probative when defining a standard of care.’”5 Other courts have also concurred that “advisory guidelines and recommendations, while not conclusive, are admissible as bearing on the standard of care in determining negligence.”6 Openly complying with industry standards can provide an objective input into an otherwise subjective determination.

Strict liability takes a different approach by automatically placing liability in cases of dangerous animals, ultrahazardous activities, and product defects. Although not yet established, harm caused by AI would most likely qualify under the product defect theory, which would make an AI developer liable if they failed to provide adequate warning or if the product is “defective.”7 The current wave of chatbot litigation points to this approach, with various plaintiffs pursuing this avenue.8 In determining what qualifies as “adequate warning” or whether a product is “defective,” courts often look to industry standards. Although a minority of states do not consider industry standards for strict liability,9 the majority of states and federal courts do.10 Following widely adopted established standards, like those by NIST and ISO, can prove dispositive in most strict liability cases relating to AI.

In terms of punitive damages, both state and federal courts have looked favorably on adherence to industry standards when determining if the defendant acted in good faith; however, there have been exceptions:

- A manufacturer followed industry standards but actively resisted safer designs based on economic considerations.11

- A company followed industry standards but knew about a remaining risk and failed to warn and remedy the risk.12

- A manufacturer followed industry standards but knowingly engaged in conduct that endangered people.13

While following industry standards has been beneficial for determining “good faith” when assessing punitive damages, it mostly serves as the baseline for expected behavior. Readily demonstrating adherence to external standards is necessary to avoid hefty punitive damages awards.

Whether through statutory requirements in Colorado and California, affirmative defenses in Texas, or judicial interpretation of “reasonable care” and “good faith” in liability cases, frameworks like NIST’s AI RMF and ISO 42001 are transitioning from voluntary best practices to de facto legal requirements. For AI developers and deployers, the message is clear: application of these standards is becoming less optional and more essential for managing legal risk. Companies that wait for explicit regulatory mandates may find themselves already behind the curve, and organizations should begin implementing recognized risk management frameworks now, not because the law explicitly requires it everywhere, but because the legal system is already treating these standards as the baseline for reasonable conduct.

Beyond the defensive calculus of avoiding penalties and litigation exposure, there is an equally compelling case for adopting external standards: they are simply good governance. These frameworks are road-tested guides for building AI systems that produce accurate, ethical, and trustworthy outcomes. Organizations that internalize them are better positioned to gain customer trust, regardless of what any particular state legislature has done. Given the near impossibility of creating compliance programs that anticipate every nuance of every emerging AI law across fifty states, demonstrating a genuine, documented commitment to robust governance gives regulators reason to extend good faith when questions arise. An organization that can point to systematic, principled governance processes is better positioned in a regulatory conversation than one scrambling to reverse-engineer compliance after the fact. The growing statutory references to NIST and ISO standards are a development worth watching closely, but the stronger argument for adoption may be the proactive one: that these frameworks represent a genuine commitment to getting AI governance right, not merely a hedge against enforcement risk.

Design elements of the above image were generated with the assistance of AI and reviewed by FPF.

1. Colo. Rev. Stat. Ann. § 6-1-1703.

2. 2025 Tex. Sess. Law Serv. Ch. 1174, Sec. 552.105(e)(2)(D) (H.B. 149).

3. Cal. Bus. & Prof. Code § 22757.12.

4. H.B. 286, 13-72b-102(1)(a) (Utah 2026); H.B. 4705, Sec. 15(a)(3)(A), 104th Gen. Assem. (Ill. 2026); S.B. 2171, 68-107-103(a)(1)(G), 114th Gen. Assem. (Tenn. 2026); N.Y. S8828 § 1421(a) (2026).

5. Elledge v. Richland/Lexington Sch. Dist. Five, 341 S.C. 473 (Ct. App. 2000).

6. Cook v. Royal Caribbean Cruises, Ltd., No. 11-20723-CIV, 2012 WL 1792628, at *3 (S.D. Fla. May 15, 2012).

7. Restatement (Second) of Torts § 402A (Am. L. Inst. 1965).

8. Garcia v. Character Techs., Inc., No. 6:24-cv-1903-ACC-DCI, 2025 U.S. Dist. LEXIS 215157 (M.D. Fla. Oct. 31, 2025).

9. Sullivan v. Werner Co., 253 A.3d 730 (2021).

10. Joshua D. Kalanic et al., AI Soft Law and the Mitigation of Product Liability Risk, CTR. FOR L., SCIENCE, & INNOVATION, ARIZONA STATE UNIVERSITY (Jul. 2021), https://papers.ssrn.com/sol3/papers.cfm?abstract_id=3909089 (“Yet, many states still allow industry standards to be presented as evidence because such standards can help show the reasonableness and adequacy of the design.”)

11. Gen. Motors Corp. v. Moseley, 447 S.E.2d 302 (1994).

12. Flax v. DaimlerChrysler Corp., 272 S.W.3d 521 (Tenn. 2008).

13. Uniroyal Goodrich Tire Co. v. Ford, 461 S.E.2d 877, 880 (1995).