Biometric technology has long been used for security and law enforcement purposes, such as national security watch lists, passport controls, criminal fingerprint databases, and immigration processing. Increasingly, however, the private sector uses these systems to verify identity for authentication that previously required a PIN or password. Apple’s decision to include a fingerprint scanner in the iPhone in 2013 brought new public awareness to non-law-enforcement applications of biometric technologies, and the company’s later shift to facial recognition for unlocking its newer models further normalized the concept. Biometric technology continues to be adopted in many sectors, including financial services, transportation, health care, computer system and facility access, and voting. In many cases, this technology is more efficient, less expensive, and easier to use than traditional alternatives. It also eliminates the need for passwords, which are broadly recognized as an insufficiently secure safeguard for user data. However, as with any digital system, there are privacy concerns around the collection, use, storage, sharing, and analysis of the data these systems generate.

Featured

Top Six Major Privacy Enforcement Trends: A U.S. Legislation Retrospective

Enforcement activity intensifies as U.S. consumer privacy laws continue to evolve and come into effect. In 2023 and 2024 alone, there have been dozens of enforcement actions at the U.S. federal and state levels, some of which reveal or touch on significant throughlines for privacy policy issues, such as what constitutes a privacy violation or […]

Old Laws & New Tech: As Courts Wrestle with Tough Questions under US Biometric Laws, Immersive Tech Raises New Challenges

Extended reality (XR) technologies often rely on users’ body-based data, particularly information about their eyes, hands, and body position, to create realistic, interactive experiences. However, data derived from individuals’ bodies can pose serious privacy and data protection risks for people. It can also create substantial liability risks for organizations, given the growing volume of lawsuits […]

Knowledge is Power: The Future of Privacy Forum launches FPF Training Program

“An investment in knowledge always pays the best interest.” (Ben Franklin) Let’s make 2023 the year we invest in ourselves, our teams, and the knowledge needed to best navigate this dynamic world of privacy and data protection. I am fortunate to know many of you who will read this blog post, but for those who I […]

Judge declares Buenos Aires’ Fugitive Facial Recognition System Unconstitutional

On September 7, a trial judge declared the implementation of the Fugitive Facial Recognition System (SRFP, for its name in Spanish) by the Government of the City of Buenos Aires unconstitutional. The decision set an important precedent for risks associated with privacy and intimacy in public spaces in the context of public surveillance for law […]

When is a Biometric No Longer a Biometric?

In October 2021, the White House Office of Science and Technology Policy (OSTP) published a Request for Information (RFI) regarding uses, harms, and recommendations for biometric technologies. Over 130 entities responded to the RFI, including advocacy organizations, scientists, health care experts, lawyers, and technology companies. While most commenters agreed on core concepts of biometric technologies used […]

Five Things Lawyers Need to Know About AI

Lawyers are trained to respond to risks that threaten the market position or operating capital of their clients. However, when it comes to AI, it can be difficult for lawyers to provide the best guidance without some basic technical knowledge. This article shares some key insights from our shared experiences to help lawyers feel more at ease responding to AI questions when they arise.

Brain-Computer Interfaces: Privacy and Ethical Considerations for the Connected Mind

BCIs are computer-based systems that directly record, process, analyze, or modulate human brain activity in the form of neurodata that is then translated into an output command from human to machine. Neurodata is data generated by the nervous system, composed of the electrical activities between neurons or proxies of this activity. When neurodata is linked, or reasonably linkable, to an individual, it is personal neurodata.

Now, On the Internet, EVERYONE Knows You’re a Dog

Digital identity systems vary in complexity. At its most basic, a digital ID would simply recreate a physical ID in a digital format, whereas a fully integrated digital identity system would provide a platform for a complete wallet and verification process, usable both online and in the physical world.

Five Top of Mind Data Protection Recommendations for Brain-Computer Interfaces

By Jeremy Greenberg and Katelyn Ringrose. Key FPF-curated background resources (policy and regulatory documents, academic papers, and technical analyses regarding brain-computer interfaces) are available here. Recently, Elon Musk livestreamed an update for Neuralink, his startup centered on creating brain-computer interfaces (BCIs). BCI is an umbrella term for devices that detect, amplify, and translate […]

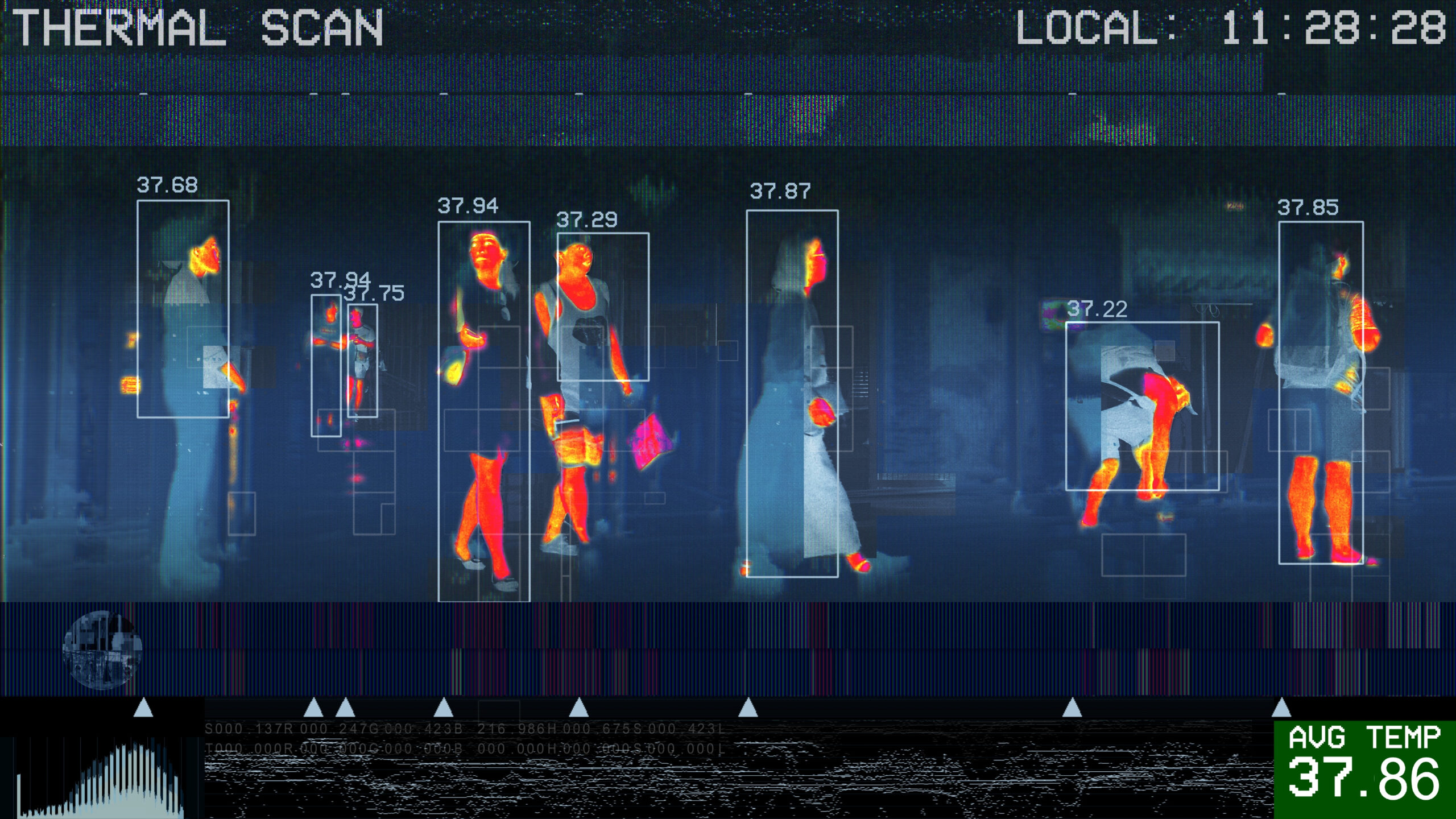

Thermal Imaging as Pandemic Exit Strategy: Limitations, Use Cases and Privacy Implications

Authors: Hannah Schaller, Gabriela Zanfir-Fortuna, and Rachele Hendricks-Sturrup. Around the world, governments, companies, and other entities are either using or planning to rely on thermal imaging as an integral part of their strategy to reopen economies. The announced purpose of using this technology is to detect potential cases of COVID-19 and filter out individuals in […]