Contextualizing the Proposed SECURE Data Act in the State Privacy Landscape

Special thanks to FPF’s Dr. Gabriela Zanfir-Fortuna, VP of Global Policy, for her contributions to this analysis.

The House Committee on Energy and Commerce’s Republican data privacy working group released their long-awaited comprehensive consumer privacy bill on April 22, titled the “Securing and Establishing Consumer Uniform Rights and Enforcement over Data Act” (SECURE Data Act) (H.R. 8413). Compared to prior federal efforts, the SECURE Data Act closely resembles many of the existing state comprehensive privacy laws—particularly those based on the Washington Privacy Act (WPA) framework—in terms of its structure, terminology, consumer rights, and business obligations.

This blog post provides a detailed overview of the SECURE Data Act, including its scope, provisions, and how it compares to the state laws based on the WPA framework.

Our key observations:

- Reflects Narrow WPA Baseline: The bill is closest to some of the narrower iterations of the WPA controller/processor framework, such as the laws in Kentucky, Iowa, Tennessee, Utah, and Alabama’s recently enacted law. It does include certain provisions absent from some of the narrowest state frameworks, such as data minimization (not in Iowa or Utah) and anti-discrimination protections (not in Utah). The comparisons to state privacy laws focus on the laws other than the CCPA because they share the same key terms and structure as this bill. We simply note that this bill is consistently narrower and less prescriptive than what is required under the CCPA.

- Adopts Narrow Outlier Provisions: The bill selects particular narrow approaches used by only a handful of states: Virginia’s narrow biometric data definition (which broadly exempts photos, videos, and audio without limiting language), the pseudonymous data exception for consumer opt-out rights (Tennessee, Iowa, Florida, Alabama only), the absence of data protection impact assessments (Iowa, Utah, Alabama only), and no requirement for controllers to recognize opt-out preference signals (although the Secretary of Commerce would be required to study the feasibility of such signals).

- Novel Additions: While narrow overall, the bill includes elements beyond typical state frameworks: a federal data broker registry, classification of all teens’ data (ages 13-15) as sensitive data with parental controls, application to common carriers, and a Code of Conduct certification process (modeled on COPPA safe harbor) that provides a rebuttable presumption of compliance. The bill would recognize Global Cross-Border Privacy Rules (CBPR) as an approved code. Only Tennessee has a comparable affirmative defense provision.

- Broad Preemption: The bill’s scope and broad preemption language could preempt state comprehensive privacy laws, sectoral laws (Illinois BIPA, Washington My Health My Data Act, kids’ privacy laws), and data broker laws (California Delete Act or similar registration laws in Texas, Nevada, Oregon, and Vermont). Preemption is not automatic, though, and would require litigation on a state-by-state basis. Laws like the CCPA/CPRA that cover exempted categories (employee data, B2B data) may prove difficult to fully preempt.

1. Scope

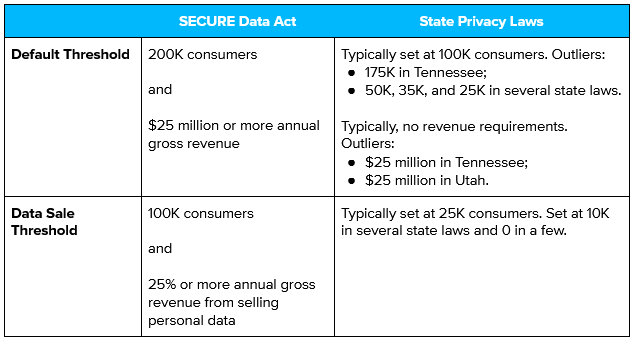

Applicability: The bill would apply to any business subject to the FTC Act, or any common carrier subject to Title II of the Communications Act of 1934, that, excluding personal data controlled or processed solely for completing a payment transaction, either (1) has gross annual revenue in excess of $25 million and collects or processes the personal data of at least 200,000 consumers annually or (2) collects and processes personal data of at least 100,000 consumers and derives at least 25% of its annual gross revenue from selling such personal data.

These default and data sale thresholds are structurally similar to how most state comprehensive privacy laws are scoped, but the figures themselves are higher than in any of the states.

Nonetheless, direct comparison is difficult because the state laws’ thresholds apply at 100,000 consumers per state, while the federal bill applies at 200,000 consumers nationally. Thus, for businesses operating across multiple states, the federal threshold may be easier to meet despite the higher absolute number, while the bill’s additional revenue requirement ($25M) could exclude smaller data-intensive entities within scope of many state laws.
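To make the two-prong test concrete, the thresholds described above can be sketched as a small Python check. This is only an illustration under stated assumptions: the `Business` class, its field names, and the `meets_threshold` function are hypothetical, the consumer count is assumed to already exclude data handled solely to complete payment transactions, and none of the entity-level or data-level exemptions are modeled.

```python
from dataclasses import dataclass

# Figures taken from the applicability description above.
REVENUE_FLOOR_USD = 25_000_000   # prong 1: gross annual revenue must exceed this
PRONG1_CONSUMERS = 200_000       # prong 1: consumers per year (nationwide)
PRONG2_CONSUMERS = 100_000       # prong 2: consumers
PRONG2_SALE_SHARE = 0.25         # prong 2: share of gross revenue from selling personal data


@dataclass
class Business:
    """Hypothetical summary of a business; field names are illustrative only."""
    gross_annual_revenue_usd: float
    consumers_processed: int              # assumed to exclude payment-only data
    revenue_share_from_data_sales: float  # fraction between 0.0 and 1.0


def meets_threshold(b: Business) -> bool:
    """Return True if either prong of the size threshold is satisfied."""
    general_prong = (
        b.gross_annual_revenue_usd > REVENUE_FLOOR_USD
        and b.consumers_processed >= PRONG1_CONSUMERS
    )
    data_sale_prong = (
        b.consumers_processed >= PRONG2_CONSUMERS
        and b.revenue_share_from_data_sales >= PRONG2_SALE_SHARE
    )
    return general_prong or data_sale_prong


# A large retailer clears the general prong.
retailer = Business(30_000_000, 250_000, 0.0)
# A small firm with many consumers but low revenue and no data sales does not.
small_app = Business(10_000_000, 500_000, 0.0)
# A low-revenue firm that earns 40% of revenue from data sales clears prong 2.
seller = Business(5_000_000, 120_000, 0.40)
```

The `small_app` case illustrates the point made above: a data-intensive business that would exceed a typical state consumer threshold can still fall outside the bill because of the additional revenue requirement.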

Exemptions: Consistent with most of the state laws, this bill includes a variety of entity-level exemptions, such as: federal, state, or local governmental entities (or any entities acting as a processor on behalf of a federal or state governmental entity); financial institutions subject to the Gramm-Leach-Bliley Act (GLBA); HIPAA-covered entities or business associates; nonprofits; and institutions of higher education.

Notable data-level exemptions include: HIPAA-protected health information; health records; personal data that may impact the creditworthiness, credit standing, character, or general reputation of a consumer and is collected or disclosed by a consumer reporting agency or a furnisher engaged in activities subject to the Fair Credit Reporting Act (FCRA); and information subject to other laws such as the Driver’s Privacy Protection Act (DPPA), the Family Educational Rights and Privacy Act (FERPA), and GLBA. The bill also broadly exempts “publicly available information.” This is defined consistently with many state privacy laws as (1) information that is lawfully made available through government records or (2) “information that a business has reason to believe is lawfully made available to the public through widely distributed media, by the consumer, or by a person to whom the consumer has disclosed the information, unless the consumer has restricted the information to a specific audience.” There are also exceptions for deidentified and pseudonymous data, both of which are defined in the bill.

One point of comparison with the state legislative landscape is the distinction between entity- and data-level exemptions. The newer and recently amended state laws have tended to eschew entity-level exemptions, particularly under GLBA and HIPAA, in favor of data-level exemptions. This bill opts for the broader entity-level exemptions. Although financial institutions would be broadly exempted from the bill, Congress is working on financial privacy as well. The SECURE Data Act was jointly released alongside the House Committee on Financial Services’ GUARD Financial Data Act, which would update GLBA to strengthen financial privacy protections.

In addition to the entity- and data-level exemptions, the bill also includes a variety of exceptions for common business activities, such as cooperation with law enforcement, providing a product or service specifically requested by a consumer or a parent of a consumer, preventing security incidents, engaging in public or peer-reviewed scientific or statistical research in the public interest (subject to safeguards), conducting internal research for product development and improvement, performing internal operations reasonably aligned with consumers’ expectations, and more. These exceptions are common in state privacy laws.

Key Definitions: The definitions in this bill are generally consistent with the majority of state comprehensive privacy laws, including common core definitions such as “consumer” (an individual acting in their individual or household capacity and not in a commercial or employment context), “personal data” (any information that is linked or reasonably linkable to an identified or identifiable natural person, excluding deidentified data or publicly available information), and “sensitive data” (includes sensitive characteristics [such as race and ethnicity, religious belief, sexual orientation, citizenship], genetic and biometric data, and personal data from a child). As discussed below, the bill includes a novel extension of sensitive data to also include personal data from teens, defined as individuals aged 13 or over but under 16.

There are a few definitions that, while consistent with some state laws, are among the narrowest versions of those definitions. “Biometric data,” for example, does not include data generated from photographs or video or audio recordings, even if such data is used to identify an individual. The “sale of personal data” is also defined narrowly as the exchange of personal data for “monetary consideration,” whereas many states have extended this to include exchanges “for other valuable consideration.”

2. Consumer Rights

Similar to much of the bill, the consumer rights most closely resemble the narrower iterations of the WPA framework. This bill includes the standard consumer rights to: confirm whether the controller is processing one’s personal data and to access that data; correct inaccuracies in one’s personal data, taking into account the nature of the personal data and the purpose of the processing; delete one’s personal data provided by, or obtained from, the consumer; obtain a copy of one’s personal data in a portable format (if technically feasible); and opt out of the processing of one’s personal data for targeted advertising, the sale of personal data, and profiling in furtherance of a solely automated decision that has a legal or similarly significant effect on the consumer. The bill also frames the requirement to obtain consent prior to processing a consumer’s sensitive data as a consumer right rather than a controller obligation.

Although the standard rights are all present, this bill lacks some of the newer rights that have been included in a few of the state laws. For example, Oregon, Delaware, Maryland, and Minnesota all provide a right to know third-party recipients of one’s personal data. Minnesota and Connecticut include rights to contest certain adverse profiling decisions. Neither of those rights is in this bill.

Another significant aspect of these rights is the pseudonymous data exemption. Consistent with a few of the state privacy laws, this bill provides that the consumer rights do not apply to pseudonymous data. This arguably narrows the right to opt out of targeted advertising, if a controller is able to demonstrate that “any information necessary to identify the consumer is kept separately and is subject to appropriate administrative and technical measures to ensure that the personal data is not attributed to an identified or identifiable natural person.” Because the requirement to obtain consent before processing a consumer’s sensitive data is included in the same section as the consumer rights, this also arguably brings pseudonymous data outside the scope of that opt-in consent requirement, which is something that none of the state comprehensive privacy laws have done. However, that is debatable. The pseudonymous data exception provides that “[a]n assertion of any consumer right under section 2 does not apply to pseudonymous data” provided additional protections are met. The word “assertion” implies an affirmative action on the part of the consumer, which may limit the exception to only the consumer rights and not the consent requirement. Furthermore, Section 2, although labeled “Consumer privacy rights,” has distinct subheadings for “(a) Consumer Privacy Rights” and “(b) Consent Required for Processing Sensitive Data.” Although the exception says “any consumer right under section 2,” it could be interpreted to apply only to the rights in subsection 2(a). Nevertheless, pseudonymous data is still subject to a number of protections under the bill, such as data minimization and data security obligations.

Finally, it is notable that this bill does not impose a requirement for controllers to recognize and comply with opt-out preference signals (OOPS) / a universal opt-out mechanism (UOOM). Privacy scholars and advocacy groups have long criticized the control-based model of American privacy law for requiring consumers to affirmatively exercise data rights, which is difficult for consumers to do at scale. A growing number of states—including California, Colorado, Connecticut, Delaware, Maryland, Minnesota, Montana, Nebraska, New Hampshire, New Jersey, Oregon, and Texas—have added the ability for consumers to exercise their opt-out rights on a default basis via a UOOM, such as the Global Privacy Control. While this bill does not require controllers to comply with such signals, it does direct the Secretary of Commerce to conduct a study on the feasibility and efficacy of such tools.
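For readers less familiar with how a universal opt-out mechanism works in practice, the following Python sketch shows one way a server might detect the Global Privacy Control signal, which browsers send as the HTTP request header `Sec-GPC: 1`. The function name `has_gpc_signal` is hypothetical, and the sketch covers only detection; what a controller must do in response depends on the applicable state law, and nothing in this bill would require it.

```python
from typing import Mapping


def has_gpc_signal(headers: Mapping[str, str]) -> bool:
    """Return True when the request headers carry `Sec-GPC: 1`.

    HTTP header names are case-insensitive, so names are lowercased
    before the lookup. Any value other than "1" is treated as no signal.
    """
    normalized = {name.lower(): value.strip() for name, value in headers.items()}
    return normalized.get("sec-gpc") == "1"


# Illustrative request headers (values are made up for the example).
with_signal = {"User-Agent": "ExampleBrowser/1.0", "Sec-GPC": "1"}
without_signal = {"User-Agent": "ExampleBrowser/1.0"}
```

A controller subject to a state UOOM requirement could, for instance, run a check like this on each request and treat a positive result as an opt-out of sale and targeted advertising for that browser.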

3. Business Obligations

The duties for controllers and processors under this bill largely align with those commonly found in state comprehensive privacy laws. For example, controllers are subject to procedural data minimization and purpose limitation requirements that tie data collection and processing to what is disclosed in a controller’s privacy notice. This is consistent with the approach taken in most of the state privacy laws. A controller must—

- Limit the collection of personal data to what is adequate, relevant, and reasonably necessary in relation to the purposes for which such data is processed, as disclosed to the consumer; and

- Obtain the consumer’s consent to process personal data for purposes that are neither reasonably necessary to nor compatible with the disclosed purposes for which such data is processed, as disclosed to the consumer.

Data security is another requirement that closely tracks the language adopted in almost every state comprehensive privacy law. A controller is required to establish, implement, and maintain reasonable data security practices to protect the confidentiality, integrity, and accessibility of personal data, and such practices must be appropriate to the volume and nature of the personal data at issue. While this is consistent with the language commonly seen in the state laws, the bill deviates slightly by adding a rebuttable presumption that a controller has taken appropriate security measures if the controller (1) complies with a relevant code of conduct (see below) or (2) has data security practices that are “state-of-the-art . . . including such a practice demonstrated by adherence to a widely-accepted technical specification or through a third-party attestation” and its security program “reasonably conforms to a relevant Federal or widely-accepted international risk management framework.”

Controllers are also subject to familiar requirements, such as providing a privacy notice that meets enumerated criteria (including a more novel requirement that the privacy notice disclose if personal data has been transferred to, processed in, stored in, or sold to North Korea, China, Russia, or Iran), a prohibition on processing personal data in violation of civil rights law, and oversight/contractual requirements with respect to their processors.

Notably absent from the bill is a requirement to conduct data protection impact assessments (DPIAs). All of the state comprehensive privacy laws except those in Alabama, Iowa, and Utah require some form of assessment for processing activities that present a heightened risk of harm to consumers. DPIAs are also a core component of most industry best practices.

4. Youth Privacy

As is commonly the case in comprehensive privacy laws, the bill classifies personal data of children (under 13) as sensitive data. However, the bill extends this classification to all teens’ data (aged 13 through 15), requires parental consent for teen data processing and consumer rights, and omits a defined knowledge standard—representing a meaningful departure from typical state (and federal) approaches. Additionally, this bill does not include a duty of care or heightened privacy protections and risk assessment requirements, such as those adopted in Connecticut, Colorado, and Montana.

As discussed above, controllers would be prohibited from processing a consumer’s sensitive data without consent. Consistent with the state laws, there is a clarification that processing the sensitive data of a child (although this is normally restricted to a “known child”) must be done in accordance with the Children’s Online Privacy Protection Act (COPPA). This bill goes further, however, by also requiring the verifiable consent of a parent to process the sensitive data of a teen. In turn, verifiable parental consent (VPC), under the bill, would require direct notice to the parent and unambiguous pre-collection authorization for both initial and subsequent personal data processing or use. Note that “sensitive data of a child” or “sensitive data of a teen” means any personal data of either category because sensitive data includes “personal data collected from a child or teen.”

Furthermore, consumer rights requests on behalf of children and teens could be exercised only by a parent, defined broadly to include natural parents, adoptive parents, legal guardians, and those with legal custody. This is arguably narrower than under the state laws, which often provide that a parent or guardian “may” invoke rights on behalf of the child. Similar to state laws that aim to deconflict consumer rights requests with COPPA requirements, controllers who comply with consumer rights processes under COPPA for children’s data requests would be deemed compliant with consumer rights requirements under this bill. These parental rights with respect to processing teens’ sensitive data and invoking teens’ data rights are a contrast to the state privacy laws. While a growing number of states envision some layer of heightened protections for teens, these laws typically do not require parental consent for processing the data of minors above the age of 12, broadly maintaining teen autonomy over data collection and processing decisions.

The bill notably omits a knowledge standard for child and teen requirements, arguably creating ambiguity regarding when controllers should be on notice to implement age-specific protections and obligations. In contrast, state privacy laws commonly utilize either “actual knowledge” or “actual knowledge or willful disregard” standards. Note that Congress is concurrently considering several other youth privacy and online safety legislative proposals, including COPPA 2.0 and the App Store Accountability Act, which could inform the future trajectory of this bill’s minor-specific protections and age-based knowledge triggers among related frameworks.

5. Novel Requirements: Data Brokers, Cross-Border Data Transfers, and Codes of Conduct

While the majority of this bill borrows heavily from existing laws in states like Kentucky and Tennessee, it includes a few requirements that are either atypical or completely novel: data broker registration, explicit authority for the Secretary of Commerce to advise on cross-border data transfers, and Codes of Conduct under the law.

First, the bill requires data brokers to register with the FTC, which would then publish a searchable registry. Similar requirements are seen in standalone data broker registry laws in Vermont, California, Nevada, Texas, and Oregon, though each varies in definitions and specific obligations. California’s Delete Act goes the furthest by creating an accessible deletion mechanism that allows a consumer to submit a deletion request to all registered data brokers. Compared to most state data broker laws, however, the bill’s definition of “data broker” is fairly narrow, covering a controller that (i) collects and processes personal data of a consumer who is not a customer or client of the controller or a user, reader, or subscriber of a product or service by the controller and (ii) derives at least 50% of its annual gross revenue from selling personal data. “Data broker” does not include a person acting as a processor.
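The two-part “data broker” definition summarized above can likewise be sketched as a simple predicate. The `Controller` class, its field names, and `is_data_broker` are hypothetical names chosen for illustration, and the sketch reduces the first prong (data about people who are not customers, clients, users, readers, or subscribers) to a single yes/no flag that would in reality require legal analysis.

```python
from dataclasses import dataclass

DATA_BROKER_SALE_SHARE = 0.50  # at least 50% of annual gross revenue from selling personal data


@dataclass
class Controller:
    """Hypothetical summary of an entity; field names are illustrative only."""
    processes_data_of_non_customers: bool   # prong (i), simplified to a flag
    revenue_share_from_data_sales: float    # fraction between 0.0 and 1.0
    acting_as_processor: bool = False       # processors are carved out of the definition


def is_data_broker(c: Controller) -> bool:
    """Return True only if both prongs are met and the processor carve-out does not apply."""
    if c.acting_as_processor:
        return False
    return (
        c.processes_data_of_non_customers
        and c.revenue_share_from_data_sales >= DATA_BROKER_SALE_SHARE
    )


# A firm earning 60% of revenue from selling non-customer data meets both prongs.
list_seller = Controller(True, 0.60)
# A firm that sells some non-customer data, but for only 30% of revenue, does not.
ad_platform = Controller(True, 0.30)
```

The `ad_platform` case shows why the definition is described as fairly narrow: a business could sell personal data about non-customers and still fall outside the registry if data sales make up less than half of its revenue.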

A novel addition to this bill compared to past iterations of a federal privacy framework is a set of provisions concerning international data flows and the protection of personal data in international commerce. Notably, though, the bill does not propose any restrictions for the transfer of personal data of US persons across borders. On the contrary, the provisions seem to converge towards supporting the international flow of personal data.

The bill would designate the Secretary of Commerce as the President’s principal advisor on international personal data flows and empower the Secretary to: assess foreign governments’ data protection frameworks for alignment with the bill’s protections; develop policy recommendations addressing topics such as the impact of international data flows on consumer rights, economic competitiveness, and U.S. security interests, including mitigation of risks posed to the international flow of personal data by “covered nations” (i.e., North Korea, China, Russia, and Iran); and negotiate international agreements with foreign governments, forums, or political and economic unions to promote cross-border data flows. The last of these provisions would seemingly cover agreements such as the existing EU/UK/Switzerland – U.S. Data Privacy Framework, opening the possibility for such agreements with other nations or political unions as well (more ambiguous is how the provision would relate to coverage of cross-border data transfers in international trade agreements, like the US-Mexico-Canada Agreement and the US-Japan Digital Trade Agreement). The concept of “assessing” foreign governments’ data protection frameworks for “alignment” with the protections in the bill is reminiscent of “adequacy assessments” in international data transfer regimes around the world. A data protection regime found adequate usually means that personal data can flow with no restrictions to that foreign nation. However, it is not clear to what end the assessment proposed in the bill would be conducted.

Finally, one of the more interesting additions to the bill is codes of conduct. Any controller or processor (or group thereof) would be able to submit an application to the Secretary of Commerce for “approval of a code of conduct that meets or exceeds the requirements . . . under this Act.” Such a code of conduct must include an independent organization to administer the code, assess compliance, and refer would-be violators to the FTC or a state attorney general. There would be a public comment period prior to approval, and the Secretary could later withdraw approval. Controllers or processors in compliance with an approved code of conduct would be entitled to a rebuttable presumption that they are in compliance with the relevant requirements of the Act. These codes of conduct appear loosely comparable to the safe harbor program provided in the COPPA Rule. Notably, a certification by a controller pursuant to the Global Cross Border Privacy Rules system (or any successor system) or by a processor pursuant to the Global Cross Border Privacy Rules System Privacy Recognition for Processors (or any successor system) would be treated as participation in an approved code of conduct. This appears to be inspired by similar provisions in Tennessee’s law and is consistent with efforts across successive U.S. administrations to promote the Global CBPR system.

6. Preemption

With respect to state law, the bill includes broad preemption language that would prohibit any state, or political subdivision of a state, from prescribing, maintaining, or enforcing any law, rule, regulation, or other provision if it “relates to the provisions of this Act.” This broad “relates to” standard could preempt:

- State comprehensive privacy laws;

- Sectoral privacy laws including Illinois BIPA, Washington My Health My Data Act, and kids’ privacy laws; and

- Data broker laws, including the California Delete Act and state data broker registration requirements.

Nonetheless, if this law passed, preemption would not be automatic. State laws would need to be challenged individually in court to determine whether specific provisions conflict with or “relate to” the federal law. For example, the CCPA/CPRA may be more difficult to fully preempt because it covers employee data, B2B data, and applicant data—categories the federal bill exempts.

With respect to federal law, the bill explicitly preserves a number of federal privacy laws and regulations, including COPPA, GLBA, HIPAA, FCRA, and FERPA (to the extent a controller or processor is an educational agency or institution). The Communications Act of 1934 and any FCC regulations promulgated under that law would not apply to a controller or processor with respect to the collection, use, processing, transferring, or security of personal data. This bill would repeal the Video Privacy Protection Act (VPPA), 18 U.S.C. § 2710.

7. Enforcement

Enforcement authority for violations of the bill would be given exclusively to the FTC and state attorneys general. This approach is consistent with all of the state comprehensive privacy laws—but for California’s narrow private right of action (PRA) with respect to data breaches, none of the state comprehensive privacy laws include a PRA.

The FTC would enforce violations of the bill as a violation of a trade regulation rule regarding unfair or deceptive acts or practices under the FTC Act. The FTC would also be authorized to enforce the bill against common carriers under the Communications Act of 1934. Notably, the FTC would be prohibited from enforcing any violation of section 3(c) of the bill, which prohibits a controller from processing personal data in violation of a federal law that prohibits unlawful discrimination against a consumer. Rather, the FTC would be directed to transmit any information indicating a violation of that provision to any agency with authority to initiate an enforcement action concerning it.

The bill also empowers state attorneys general as parens patriae to bring civil actions seeking injunctive relief, damages, restitution, and other legal and equitable relief. Prior to filing an action, a state AG must provide the FTC with written notice of the action, allowing the FTC to intervene in the matter. A state AG would be prohibited from bringing an action against any defendant named in an ongoing civil action under the bill instituted by the FTC or the Attorney General of the United States (note: this is the only reference to the Attorney General of the United States under the bill). Overall, this enforcement structure is conceptually similar to that under COPPA, under which the FTC is the federal enforcement authority but state attorneys general are empowered to pursue actions provided that they notify the FTC, which has the right to intervene. It is notable that the state enforcement authority is limited solely to attorneys general whereas prior efforts such as the ADPPA and the APRA included carve-outs for a “State Privacy Authority of a State” or “an officer or office of a State authorized to enforce privacy or data security laws.” Without a comparable exception, CalPrivacy would not be able to enforce this bill.

The bill includes a right to cure, requiring the FTC or a state AG to provide notice of an alleged violation and allowing 45 days for the controller or processor to cure the violation and promise that no such further violation shall occur. The state privacy laws are split as to whether they include a right to cure: some include no right to cure, some include a permissive cure option at the AG’s discretion, some have a right to cure that will sunset after a set date, and some have a mandatory right to cure with no sunset provision. An additional source of flexibility is the addition of codes of conduct (discussed above), which can entitle a participating controller or processor to a rebuttable presumption of compliance with this bill.

8. Conclusion

It’s a running joke in the privacy community that important bills always drop on Friday afternoons or holidays, so it was no surprise that this bill was released on everyone’s favorite spring holiday—Earth Day. Humor aside, a federal comprehensive privacy law is long overdue, and it is encouraging to see Congress renewing its attention to this topic. It remains to be seen whether the SECURE Data Act will fare better than prior efforts such as the ADPPA and the APRA. Although it appears that significant partisan consensus building has already gone into this process, which could ease the bill’s passage through committee, time is running out for the 119th United States Congress.

What is already evident, however, is how much influence the state comprehensive privacy landscape exerted on this bill as compared to prior efforts. The bill’s key terms, rights, obligations, and overall structure closely resemble those of most of the state comprehensive privacy laws, based on the flexible WPA framework, even if the specific provisions selected hew more closely to the narrower iterations of that framework. We note that a number of the exclusions or omissions in the bill are likely intended to create a margin for negotiations with other members and stakeholders in order to garner support. Although the time frame is uncertain, this bill is the first significant proposal drafted to reflect the current landscape of state laws that already protect a majority of U.S. residents and may reflect a first draft of a framework that eventually becomes law.

FPF will continue to monitor how this bill evolves as it progresses through committee and a broad set of stakeholders across industry, civil society, and academia provide their feedback.