An AI-based computer system can gather data and use that data to make decisions or solve problems, using algorithms to perform tasks that, if done by a human, would be said to require intelligence. AI and machine learning (ML) systems are already delivering benefits such as better health care, safer transportation, and greater efficiency across the globe. But the increasing amounts of data and computing power that enable sophisticated AI and ML models raise questions about privacy impacts, ethical consequences, fairness, and real-world harms if the systems are not designed and managed responsibly. FPF works with commercial, academic, and civil society supporters and partners to develop best practices for managing risk in AI and ML, and to assess whether long-standing data protection principles such as fairness, accountability, and transparency are sufficient to answer the ethical questions these systems raise.

Featured

FPF Publishes Report Supporting Stakeholder Engagement and Communications for Researchers and Practitioners Working to Advance Administrative Data Research

The ADRF Network is an evolving grassroots effort among researchers and organizations seeking to collaborate on improving access to administrative data and promoting its ethical use in social science research. As a supporter of evidence-based policymaking and research, FPF has been an integral part of the Network since its launch and has chaired the Network’s Data Privacy and Security Working Group since November 2017.

Beyond Explainability: A Practical Guide to Managing Risk in Machine Learning Models

Beyond Explainability aims to provide a template for effectively managing the risks of machine learning models in practice. Its goal is to give lawyers, compliance personnel, data scientists, and engineers a framework to safely create, deploy, and maintain ML models, and to enable effective communication among these distinct organizational perspectives.

Public comments on proposed Open Data Risk Assessment for the City of Seattle

FPF requested public feedback on its Draft Open Data Risk Assessment for the City of Seattle. In its 2016 Open Data Policy, the City of Seattle declared that the city’s data would be “open by preference,” except when doing so may affect individual privacy. To ensure its Open Data program effectively protects individuals, Seattle committed to performing an annual risk assessment and tasked FPF with creating and deploying an initial privacy risk assessment methodology for open data.

Unfairness By Algorithm: Distilling the Harms of Automated Decision-Making

Analysis of personal data can be used to improve services, advance research, and combat discrimination. However, such analysis can also raise valid concerns about differential treatment of individuals or harmful impacts on vulnerable communities. These concerns can be amplified when automated decision-making uses sensitive data (such as race, gender, or familial status), impacts protected classes, or affects individuals’ eligibility for housing, employment, or other core services. When seeking to identify harms, it is important to appreciate the context of interactions between individuals, companies, and governments, including the benefits provided by automated decision-making frameworks and the fallibility of human decision-making.

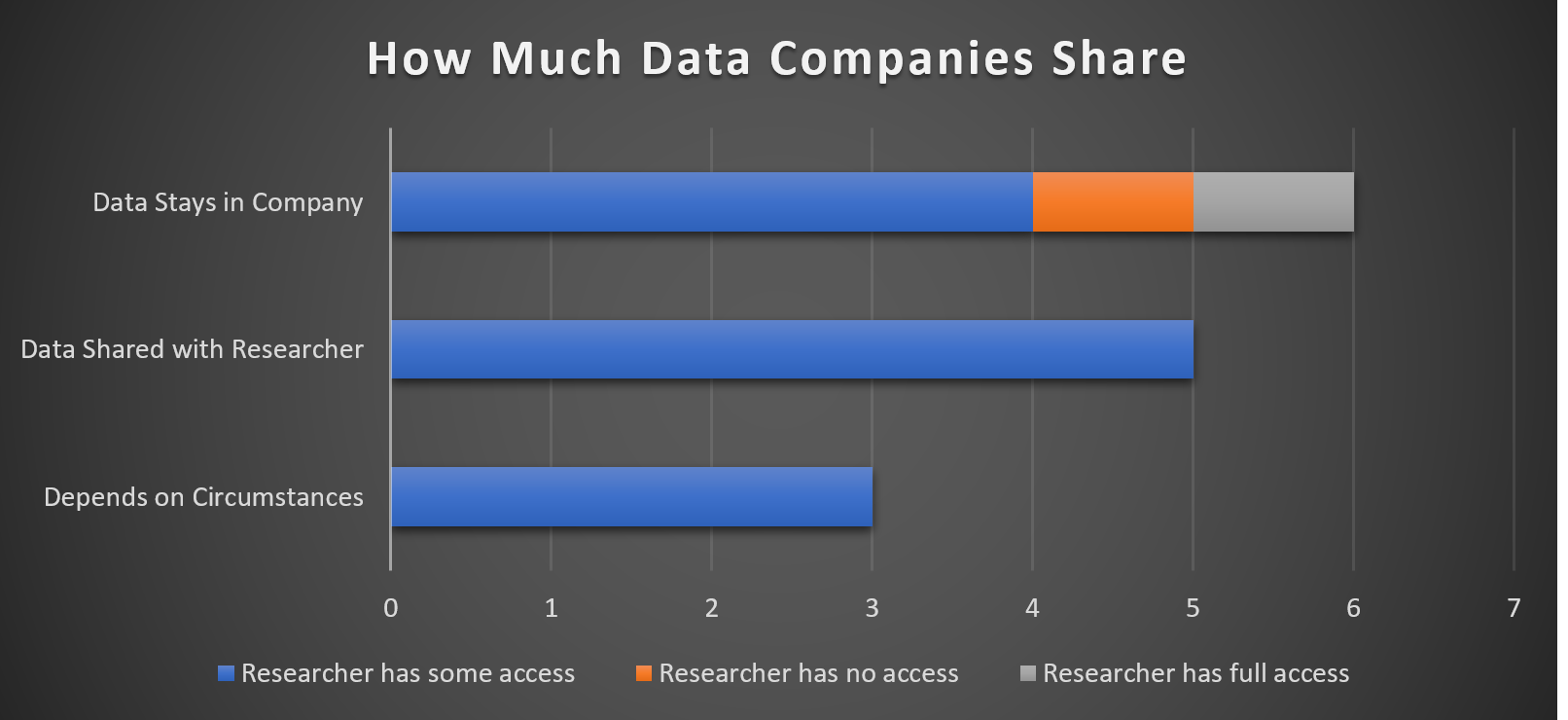

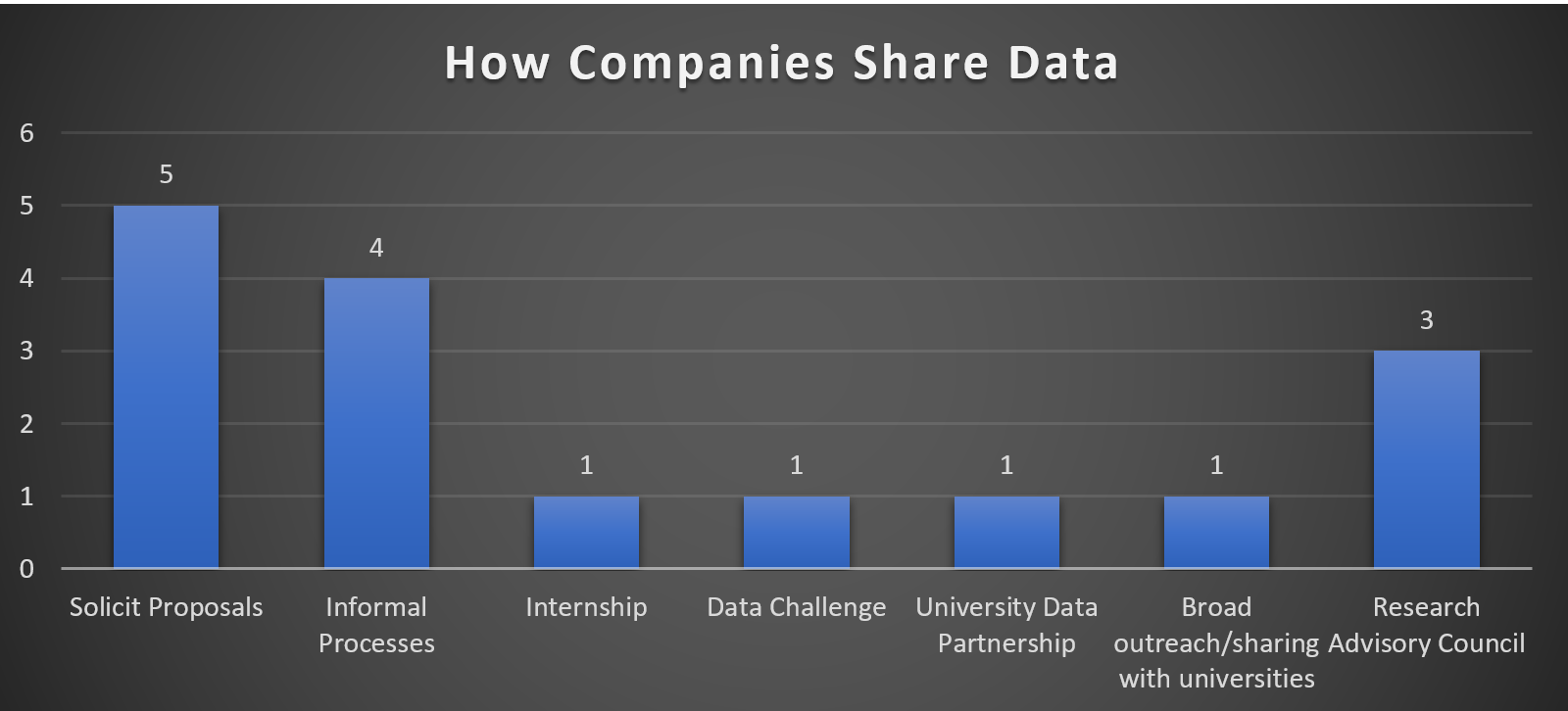

Understanding Corporate Data Sharing Decisions: Practices, Challenges, and Opportunities for Sharing Corporate Data with Researchers

Today, the Future of Privacy Forum released a new study, Understanding Corporate Data Sharing Decisions: Practices, Challenges, and Opportunities for Sharing Corporate Data with Researchers. In this report, we aim to contribute to the literature by seeking the “ground truth” from the corporate sector about the challenges companies encounter when they consider making data available for academic research. We hope that the impressions and insights gained from this first look at the issue will help formulate further research questions, inform the dialogue between key stakeholders, and identify constructive next steps and areas for further action and investment.

New Study: Companies are Increasingly Making Data Accessible to Academic Researchers, but Opportunities Exist for Greater Collaboration

Washington, DC – Today, the Future of Privacy Forum released a new study, Understanding Corporate Data Sharing Decisions: Practices, Challenges, and Opportunities for Sharing Corporate Data with Researchers. In this report, FPF presents findings from research and interviews with experts in the academic and industry communities. The report discusses three main areas: 1) the extent to which leading companies make data available to support published research that contributes to public knowledge; 2) why and how companies share data for academic research; and 3) the risks companies perceive to be associated with such sharing, as well as their strategies for mitigating those risks.