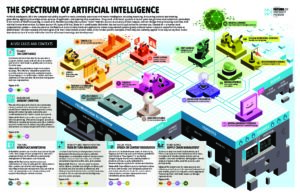

An AI-based computer system can gather data and use that data to make decisions or solve problems, relying on algorithms to perform tasks that would be said to require intelligence if done by a human. AI and machine learning (ML) systems are already delivering benefits such as better health care, safer transportation, and greater efficiency across the globe. But the growing volumes of data and computing power that enable sophisticated AI and ML models also raise questions about privacy impacts, ethical consequences, fairness, and real-world harms if these systems are not designed and managed responsibly. FPF works with commercial, academic, and civil society supporters and partners to develop best practices for managing risk in AI and ML, and to assess whether longstanding data protection practices such as fairness, accountability, and transparency are sufficient to answer the ethical questions these systems raise.

Featured

FPF Responds to the OMB’s Request for Information on Responsible Artificial Intelligence Procurement in Government

On April 29, the Future of Privacy Forum submitted comments to the Office of Management and Budget (OMB) in response to the agency’s Request for Information (RFI) on responsible procurement of artificial intelligence (AI) in government, particularly the intersection of AI tools and systems procurement with other risks posed by the development and use […]

FPF Submits Comments to the Office of Management and Budget on AI and Privacy Impact Assessments

On April 1, 2024, the Future of Privacy Forum filed comments with the Office of Management and Budget (OMB) in response to the agency’s Request for Information on how privacy impact assessments (PIAs) may mitigate privacy risks exacerbated by AI and other advances in technology. The OMB issued the RFI pursuant to the White House’s […]

FPF Submits Comments to the FEC on the Use of Artificial Intelligence in Campaign Ads

On October 16, 2023, the Future of Privacy Forum submitted comments to the Federal Election Commission (FEC) on the use of artificial intelligence in campaign ads. The FEC is seeking comments in response to a petition that asked the agency to initiate a rulemaking to clarify that its regulation on “fraudulent misrepresentation” applies to deliberately […]

FPF Testifies on Automated Decision System Legislation in California

On April 8, 2021, FPF’s Dr. Sara Jordan testified before the California Assembly Committee on Privacy and Consumer Protection on AB-13 (Public contracts: automated decision systems). The legislation passed out of committee (9 Ayes, 0 Noes) and was re-referred to the Committee on Appropriations. The bill would regulate state procurement, use, and development […]

FPF Letter to the New York State Legislature

On Friday, June 14, FPF submitted a letter to the New York State Assembly and Senate supporting a well-crafted moratorium on facial recognition systems for security uses in public schools.

FPF Comments on the FTC Informational Injury Workshop

On Friday, October 27, 2017, the Future of Privacy Forum filed comments with the Federal Trade Commission in advance of the December 12, 2017 Informational Injury Workshop. The purpose of the workshop is to examine consumer injury in the context of privacy and data security. FPF’s comments focus on describing the harms that can arise from automated decision-making as well as highlighting existing risk-based privacy analyses.