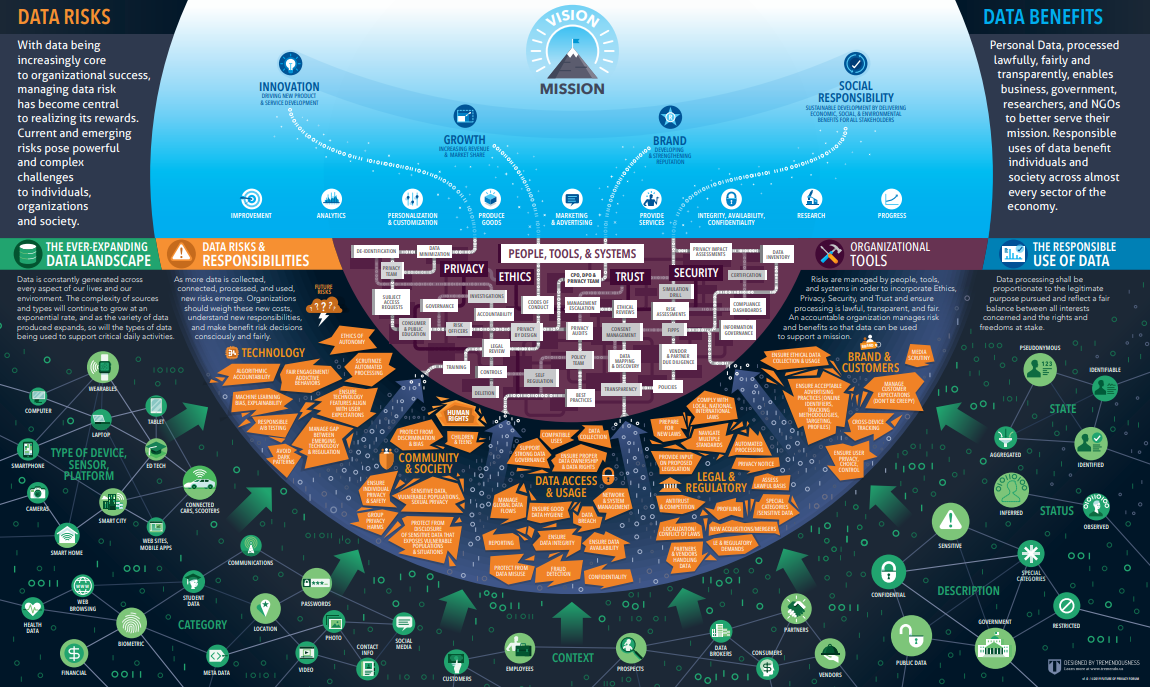

Privacy 2020: 10 Privacy Risks and 10 Privacy Enhancing Technologies to Watch in the Next Decade Report & Infographic

FPF published a white paper co-authored by CEO Jules Polonetsky and hackylawyER Founder Elizabeth Renieris to help corporate officers, nonprofit leaders, and policymakers better understand privacy risks that will grow in prominence during the 2020s, as well as rising technologies that will be used to help manage privacy through the decade. Leaders must understand the basics […]

A Privacy Playbook for Connected Car Data Report

Drivers and passengers expect cars to be safe, comfortable, and trustworthy. Individuals often consider the details of their travels, and the vehicles that take them between their home, the office, a hospital, their place of worship, or their child’s school, to be sensitive, personal data. Read FPF’s Foreword in the “A Privacy Playbook for Connected Car Data” […]

Data Protection by Process: How to Operationalise Data Protection by Design for Machine Learning Report

Immuta and the Future of Privacy Forum (FPF) released a working white paper, Data Protection by Process: How to Operationalise Data Protection by Design for Machine Learning, that provides guidance on embedding data protection principles within the life cycle of a machine learning model. Data Protection by Design (DPbD) is a core data protection requirement […]

Comparing Privacy Laws: GDPR v. CCPA Report

In November 2018, OneTrust DataGuidance and FPF partnered to publish a guide to the key differences between the General Data Protection Regulation (GDPR) and the California Consumer Privacy Act of 2018 (CCPA). This updated Guide aims to assist organisations in understanding and comparing the relevant provisions of the GDPR and the CCPA, to ensure compliance with […]

Warning Signs: The Future of Privacy and Security in an Age of Machine Learning Report

FPF is working with Immuta and others to explain the steps machine learning creators can take to limit the risk that data could be compromised or a system manipulated.

The Internet of Things (IoT) and People with Disabilities: Exploring the Benefits, Challenges, and Privacy Tensions Report

FPF released The Internet of Things (IoT) and People with Disabilities: Exploring the Benefits, Challenges, and Privacy Tensions. This paper explores the nuances of privacy considerations for people with disabilities using IoT services and provides recommendations to address privacy considerations, which can include transparency, individual control, respect for context, the need for focused collection and […]

CCPA, face to face with the GDPR: An in depth comparative analysis guide

The General Data Protection Regulation (Regulation (EU) 2016/679) (‘GDPR’) and the California Consumer Privacy Act of 2018 (‘CCPA’) both aim to guarantee strong protection for individuals regarding their personal data and apply to businesses that collect, use, or share consumer data, whether the information was obtained online or offline.

Nothing to Hide: Tools for Talking (and Listening) About Data Privacy for Integrated Data Systems Report

Data-driven and evidence-based social policy innovation can help governments serve communities better, smarter, and faster. Integrated Data Systems (IDS) use data that government agencies routinely collect in the normal course of delivering public services to shape local policy and practice. They can use data to evaluate the effectiveness of new initiatives or bridge gaps between public services and community providers.

Future of Privacy Forum and Actionable Intelligence for Social Policy: ‘Nothing to Hide: Tools for Talking (and Listening) About Data Privacy for Integrated Data Systems’

Washington, DC – Today, Future of Privacy Forum and Actionable Intelligence for Social Policy released Nothing to Hide: Tools for Talking (and Listening) About Data Privacy for Integrated Data Systems. Nothing to Hide provides governments and their partners working to integrate data for policy and program improvement with the necessary tools to lead privacy-sensitive, inclusive engagement efforts. In addition to a narrative step-by-step guide to communication and engagement on data privacy, the toolkit is supplemented with action-oriented appendices, including worksheets, checklists, exercises, and additional resources.

The Privacy Expert’s Guide to AI and Machine Learning Report

Today, FPF announces the release of The Privacy Expert’s Guide to AI and Machine Learning. This guide explains the technological basics of AI and ML systems at a level of understanding useful for non-programmers, and addresses certain privacy challenges associated with the implementation of new and existing ML-based products and services.