The FPF Center for Artificial Intelligence: Navigating AI Policy, Regulation, and Governance

The rapid deployment of Artificial Intelligence for consumer, enterprise, and government uses has created challenges for policymakers, compliance experts, and regulators. AI policy stakeholders are seeking sophisticated, practical policy information and analysis.

This is where the FPF Center for Artificial Intelligence comes in, expanding FPF’s role as the leading pragmatic and trusted voice for those who seek impartial, practical analysis of the latest challenges for AI-related regulation, compliance, and ethical use.

At the FPF Center for Artificial Intelligence, we help policymakers, privacy experts at organizations, civil society, and academics navigate AI policy and governance. The Center is supported by a Leadership Council of experts from around the globe. The Council consists of members from industry, academia, civil society, and current and former policymakers.

FPF has a long history of AI-related and emerging technology policy work that has focused on data, privacy, and the responsible use of technology to mitigate harms. From FPF’s presentation to global privacy regulators about emerging AI technologies and risks in 2017 to our briefing for US Congressional members detailing the risks and mitigation strategies for AI-powered workplace tech in 2023, FPF has helped policymakers around the world better understand AI risks and opportunities while equipping data, privacy and AI experts with the information they need to develop and deploy AI responsibly in their organizations.

In 2024, FPF received a grant from the National Science Foundation (NSF) to advance the White House Executive Order on Artificial Intelligence. The grant supports the use of Privacy Enhancing Technologies (PETs) by government agencies and the private sector by advancing legal certainty, standardization, and equitable uses. FPF is also a member of the U.S. AI Safety Institute at the National Institute of Standards and Technology (NIST), where it focuses on assessing the policy implications of the changing nature of artificial intelligence.

Areas of work within the FPF Center for Artificial Intelligence include:

- Legislative Comparison

- Responsible AI Governance

- AI Policy by Sector

- AI Assessments & Analyses

- Novel AI Policy Issues

- AI and Privacy Enhancing Technologies

FPF Center for AI Leadership Council

The FPF Center for Artificial Intelligence will be supported by a Leadership Council of leading experts from around the globe. The Council will consist of members from industry, academia, civil society, and current and former policymakers.

We are delighted to announce the founding Leadership Council members:

- Estela Aranha, Member of the United Nations High-level Advisory Body on AI; Former State Secretary for Digital Rights, Ministry of Justice and Public Security, Federal Government of Brazil

- Jocelyn Aqua, Principal, Data, Risk, Privacy and AI Governance, PricewaterhouseCoopers LLP

- John Bailey, Nonresident Senior Fellow, American Enterprise Institute

- Lori Baker, Vice President, Data Protection & Regulatory Compliance, Dubai International Financial Centre Authority (DPA)

- Cari Benn, Assistant Chief Privacy Officer, Microsoft Corporation

- Andrew Bloom, Vice President & Chief Privacy Officer, McGraw Hill

- Kate Charlet, Head of Global Privacy, Safety, and Security Policy, Google

- Prof. Simon Chesterman, David Marshall Professor of Law & Vice Provost, National University of Singapore; Principal Researcher, Office of the UNSG’s Envoy on Technology, High-Level Advisory Body on AI

- Barbara Cosgrove, Vice President, Chief Privacy Officer, Workday

- Jo Ann Davaris, Vice President, Global Privacy, Booking Holdings Inc.

- Elizabeth Denham, Chief Policy Strategist, Information Accountability Foundation; Former UK ICO Commissioner and British Columbia Privacy Commissioner

- Lydia F. de la Torre, Senior Lecturer at University of California, Davis; Founder, Golden Data Law, PBC; Former California Privacy Protection Agency Board Member

- Leigh Feldman, SVP, Chief Privacy Officer, Visa Inc.

- Lindsey Finch, Executive Vice President, Global Privacy & Product Legal, Salesforce

- Harvey Jang, Vice President, Chief Privacy Officer, Cisco Systems, Inc.

- Lisa Kohn, Director of Public Policy, Amazon

- Emerald de Leeuw-Goggin, Global Head of AI Governance & Privacy, Logitech

- Caroline Louveaux, Chief Privacy Officer, Mastercard

- Ewa Luger, Professor of human-data interaction, University of Edinburgh; Co-Director, Bridging Responsible AI Divides (BRAID)

- Dr. Gianclaudio Malgieri, Associate Professor of Law & Technology at eLaw, University of Leiden

- State Senator James Maroney, Connecticut

- Christina Montgomery, Chief Privacy & Trust Officer, AI Ethics Board Chair, IBM

- Carolyn Pfeiffer, Senior Director, Privacy, AI & Ethics and DSSPE Operations, Johnson & Johnson Innovative Medicine

- Ben Rossen, Associate General Counsel, AI Policy & Regulation, OpenAI

- Crystal Rugege, Managing Director, Centre for the Fourth Industrial Revolution Rwanda

- Guido Scorza, Member, The Italian Data Protection Authority

- Nubiaa Shabaka, Global Chief Privacy Officer and Chief Cyber Legal Officer, Adobe, Inc.

- Rob Sherman, Vice President and Deputy Chief Privacy Officer for Policy, Meta

- Dr. Anna Zeiter, Vice President & Chief Privacy Officer, Privacy, Data & AI Responsibility, eBay

- Yeong Zee Kin, Chief Executive of Singapore Academy of Law and former Assistant Chief Executive (Data Innovation and Protection Group), Infocomm Media Development Authority of Singapore

For more information on the FPF Center for AI, email [email protected]

Featured

Annual DC Privacy Forum: Convening Top Voices in Governance in the Digital Age

FPF hosted its second annual DC Privacy Forum: Governance for Digital Leadership and Innovation on Wednesday, June 11. Staying true to the theme, this year’s forum convened key government, civil society, academic, and corporate privacy leaders for a day of critical discussions on privacy and AI policy. Gathering an audience of over 250 leaders from […]

Future of Privacy Forum Announces Annual Privacy and AI Leadership Awards

New internship program established in honor of former FPF staff. Washington, D.C., June 12, 2025: The Future of Privacy Forum (FPF), a global non-profit focused on data protection, AI and emerging technologies, announced the recipients of the 2025 FPF Achievement Awards, honoring exceptional contributors to AI and privacy leadership in the public and […]

FPF Experts Take The Stage at the 2025 IAPP Global Privacy Summit

By FPF Communications Intern Celeste Valentino. Earlier this month, FPF participated in the IAPP’s annual Global Privacy Summit (GPS) at the Convention Center in Washington, D.C. The Summit convened top privacy professionals for a week of expert workshops, engaging panel discussions, and exciting networking opportunities on issues ranging from understanding U.S. state and global privacy […]

Lessons Learned from FPF “Deploying AI Systems” Workshop

On May 7, 2025, the Future of Privacy Forum (FPF) hosted a “Deploying AI Systems” workshop at the Privacy + Security Academy’s Spring Academy, which took place at The George Washington University in Washington, DC. Workshop participants included students and privacy lawyers from firms, companies, data protection authorities, and regulatory agencies around the world. The […]

FPF Launches Major Initiative to Study Economic and Policy Implications of AgeTech

FPF and University of Arizona Eller College of Management Awarded Grant by Alfred P. Sloan Foundation to Address Privacy Implications and Data Uses of Technologies Aimed at Aging at Home. The Future of Privacy Forum (FPF), a global non-profit focused on data protection, AI and emerging technologies, has been awarded a grant from the Alfred […]

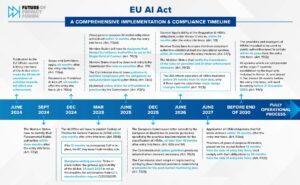

FPF and OneTrust publish the Updated Guide on Conformity Assessments under the EU AI Act

The Future of Privacy Forum (FPF) and OneTrust have published an updated version of their Conformity Assessments under the EU AI Act: A Step-by-Step Guide, along with an accompanying Infographic. This updated Guide reflects the text of the EU Artificial Intelligence Act (EU AIA), adopted in 2024. Conformity Assessments (CAs) play a significant role in […]

South Korea’s New AI Framework Act: A Balancing Act Between Innovation and Regulation

On 21 January 2025, South Korea became the first jurisdiction in the Asia-Pacific (APAC) region to adopt comprehensive artificial intelligence (AI) legislation. Taking effect on 22 January 2026, the Framework Act on Artificial Intelligence Development and Establishment of a Foundation for Trustworthiness (AI Framework Act or simply, Act) introduces specific obligations for “high-impact” AI systems […]

Chatbots in Check: Utah’s Latest AI Legislation

With the close of Utah’s short legislative session, the Beehive State is once again an early mover in U.S. tech policy. In March, Governor Cox signed several bills related to the governance of generative Artificial Intelligence systems into law. Among them, SB 332 and SB 226 amend Utah’s 2024 Artificial Intelligence Policy Act (AIPA) while […]

FPF Releases Infographic Highlighting the Spectrum of AI in Education

To highlight the wide range of current use cases for Artificial Intelligence (AI) in education and future possibilities and constraints, the Future of Privacy Forum (FPF) today released a new infographic, Artificial Intelligence in Education: Key Concepts and Uses. While generative AI tools that can write essays, generate and alter images, and engage with students […]

Why data protection legislation offers a powerful tool for regulating AI

For some, it may have come as a surprise that the first existential legal challenges large language models (LLMs) faced after their market launch were under data protection law, a legal field that looks arcane in the eyes of those enthralled by novel Artificial Intelligence (AI) law, or AI ethics and governance principles. But data protection law […]