An AI-based computer system can gather data and use that data to make decisions or solve problems, using algorithms to perform tasks that, if done by a human, would be said to require intelligence. AI and machine learning (ML) systems are already delivering benefits: better health care, safer transportation, and greater efficiencies across the globe. But the increased amounts of data and computing power that enable sophisticated AI and ML models raise questions about privacy impacts, ethical consequences, fairness, and real-world harms if the systems are not designed and managed responsibly. FPF works with commercial, academic, and civil society supporters and partners to develop best practices for managing risk in AI and ML and to assess whether historical data protection practices such as fairness, accountability, and transparency are sufficient to answer the ethical questions they raise.

Featured

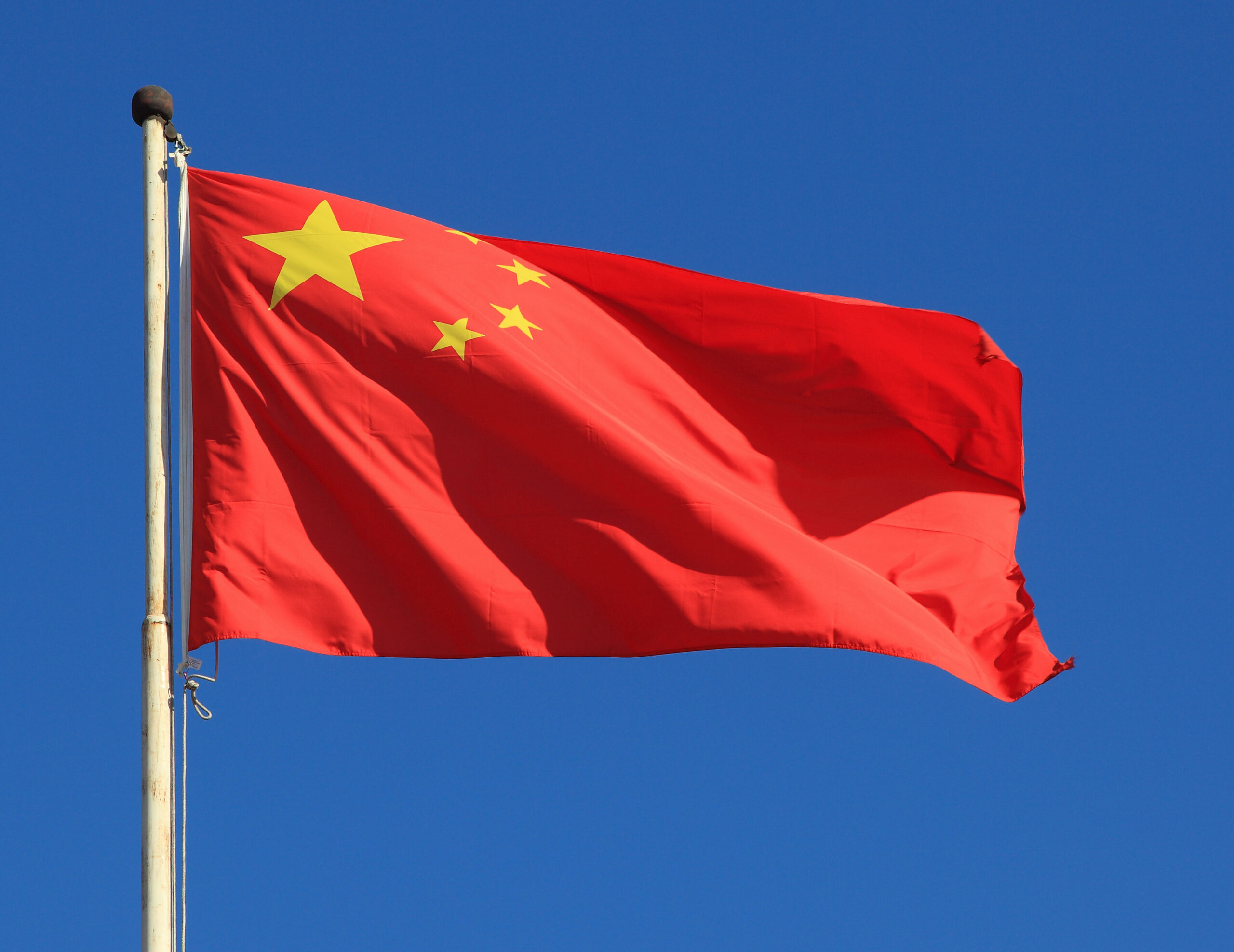

Unveiling China’s Generative AI Regulation

Authors: Yirong Sun and Jingxian Zeng. The following is a guest post to the FPF blog by Yirong Sun, research fellow at the New York University School of Law Guarini Institute for Global Legal Studies at NYU School of Law: Global Law & Tech and Jingxian Zeng, research fellow at the University of Hong Kong […]

AI Verify: Singapore’s AI Governance Testing Initiative Explained

In recent months, global interest in AI governance and regulation has expanded dramatically. Many identify a need for new governance and regulatory structures in response to the impressive capabilities of generative AI systems, such as OpenAI’s ChatGPT and DALL-E, Google’s Bard, Stable Diffusion, and more. While much of this attention focuses on the upcoming EU […]

Let’s Look at LLMs: Understanding Data Flows and Risks in the Workplace

Over the last few months, we have seen generative AI systems and Large Language Models (LLMs), like OpenAI’s ChatGPT, Google Bard, Stable Diffusion, and DALL-E, send shockwaves throughout society. Companies are racing to bake AI features into existing products and roll out new services. Many Americans are worrying whether generative AI and LLMs are going […]

Knowledge is Power: The Future of Privacy Forum launches FPF Training Program

“An investment in knowledge always pays the best interest” – Ben Franklin. Let’s make 2023 the year we invest in ourselves, our teams, and the knowledge needed to best navigate this dynamic world of privacy and data protection. I am fortunate to know many of you who will read this blog post, but for those whom I […]

Understanding Extended Reality Technology & Data Flows: Privacy and Data Protection Risks and Mitigation Strategies

This post is the second in a two-part series. Click here for FPF’s XR infographic. The first post in this series focuses on the key functions that XR devices may feature, and analyzes the kinds of sensors, data types, data processing, and transfers to other parties that power these functions. I. Introduction Today’s virtual (VR), […]

Understanding Extended Reality Technology & Data Flows: XR Functions

This post is the first in a two-part series on extended reality (XR) technology, providing an overview of the technology and associated privacy and data protection risks. Click here for FPF’s infographic, “Understanding Extended Reality Technology & Data Flows.” I. Introduction Today’s virtual (VR), mixed (MR), and augmented (AR) reality environments, collectively known as extended […]

New Infographic Highlights XR Technology Data Flows and Privacy Risks

As businesses increasingly develop and adopt extended reality (XR) technologies, including virtual (VR), mixed (MR), and augmented (AR) reality, the urgency to consider potential privacy and data protection risks to users and bystanders grows. Lawmakers, regulators, and other experts are increasingly interested in how XR technologies work, what data protection risks they pose, and what […]

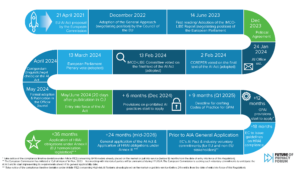

ETSI’s consumer IoT cybersecurity ‘conformance assessments’: parallels with the AI Act

In early September 2021, the European Telecommunications Standards Institute (ETSI) published its European Standard to lay down baseline cybersecurity requirements for Internet of Things (IoT) consumer products (ETSI EN 303 645 V2.1.1). The Standard is a recommendation to manufacturers to develop IoT devices securely from the outset. It also provides an internationally recognized benchmark – […]

Introduction to the Conformity Assessment under the draft EU AI Act, and how it compares to DPIAs

The proposed Regulation on Artificial Intelligence (‘proposed AIA’ or ‘the Proposal’) put forward by the European Commission is the first initiative towards a comprehensive legal framework on AI in the world. It aims to set rules on specific AI applications in certain contexts and does not intend to regulate AI technology in general. The proposed […]

FPF at CPDP LatAm 2022: Artificial Intelligence and Data Protection in Latin America

This summer the first-ever in-person Computers, Privacy and Data Protection Conference – Latin America (CPDP LatAm) took place in Rio de Janeiro on July 12 and 13. The Future of Privacy Forum (FPF) was present at the event, titled Artificial Intelligence and Data Protection in Latin America, participating in two panels and submitting a paper […]